Cloud penetration testing: Not your typical internal penetration test

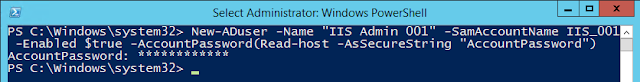

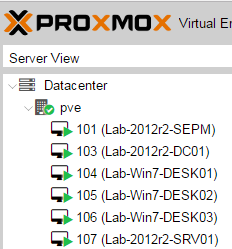

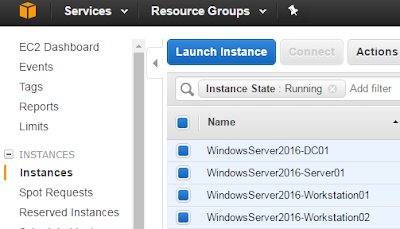

There seems to be a common path for experienced penetration testers who are thrown into the world of cloud penetration testing. I'm talking about internal (aka assumed breach) tests, where the goal is to demonstrate the impact of a compromised user with access to the cloud, or of a compromised application running in the cloud. The path usually starts with ignorance, is followed by total confusion and questioning everything you know about penetration testing, and ends with… well, it never ends. Why? Because the cloud providers, and the cloud-native technologies running on them, keep evolving at a remarkable rate. There's always more to learn and more attack paths waiting to be found.

I hope that walking through my own path, and playfully defining the stages of ignorance and awareness I encountered along the way, will help others progress through the early stages more quickly than I did.

Level 1: This is just like any other Internal Penetration Test, right?

You connect to your assumed breach sta...